Time to Revise Relativity?: Part 2

November 29, 2011

In “Time to Revise Relativity: Part 1”, I explored the idea that Faster than Light Travel (FTL) might be permitted by Special Relativity without necessitating the violation of causality, a concept not held by most mainstream physicists.

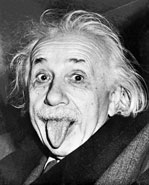

The reason this idea is not well supported has to do with the fact that Einstein’s postulate that light travels at the same speed in all reference frames gave rise to all sorts of conclusions about reality, such as the idea that it is all described by a space-time that has fundamental limits to its structure. The Lorentz factor is a consequence of this view of reality, so its use is limited to subluminal effects; it is undefined when it comes to calculating relativistic distortions past c.

So then, what exactly is the roadblock to exceeding the speed of light?

Yes, there may be a natural speed limit to the transmission of known forces in a vacuum, such as the electromagnetic force. And there may certainly be a natural limit to the speed of an object at which we can make observations utilizing known forces. But, could there be unknown forces that are not governed by the laws of Relativity?

The current model of physics, called the Standard Model, incorporates the idea that all known forces are carried by corresponding particles, which travel at the speed of light if massless (like photons and gluons) or less than the speed of light if they have mass (like the W and Z bosons), all consistent with, or derived from, the assumptions of relativity. Problem is, the Standard Model has all sorts of “unfinished business” and inconsistencies. Gravitons have yet to be discovered, the Higgs boson has yet to be observed, gravity and quantum mechanics are incompatible, and many things just don’t have a place in the Standard Model, such as neutrino oscillations, dark energy, and dark matter. Some scientists even speculate that dark matter is due to a flaw in the theory of gravity. So, given the incompleteness of that model, how can anyone say for certain that all forces have been discovered and that Einstein’s postulates are sacrosanct?

Given that barely 100 years ago we didn’t know any of this stuff, imagine what changes to our understanding of reality might happen in the next 100 years. Such as these Wikipedia entries from the year 2200…

– The ultimate constituent of matter is nothing more than data

– A subset of particles and corresponding forces that are limited in speed to c represent what used to be considered the core of the so-called Standard Model and are consistent with Einstein’s view of space-time, the motion of which is well described by the Special Theory of Relativity.

– Since then, we have realized that Einsteinian space-time is an approximation to the truer reality that encompasses FTL particles and forces, including neutrinos and the force of entanglement. The beginning of this shift in thinking occurred due to the first superluminal neutrinos found at CERN in 2011.

So, with that in mind, let’s really explore a little about the possibilities of actually cracking that apparent speed limit…

For purposes of our thought experiments, let’s define S as the “stationary” reference frame in which we are making measurements and R as the reference frame of the object undergoing relativistic motion with respect to S. If a mass m is traveling at c with respect to S, then measuring that mass in S (via whatever methods could be employed to measure it: energy, momentum, etc.) will give an infinite result. However, in R, the mass doesn’t change.
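For reference, the correction factor behind that infinite result is the standard Lorentz factor. Writing $m_S$ for the mass measured in S and $m$ for the mass in R (the subscript is just a label introduced here for illustration), with $v$ the speed of the object relative to S:

$$\gamma = \frac{1}{\sqrt{1 - v^2/c^2}}, \qquad m_S = \gamma\, m$$

As $v \to c$, the quantity under the square root goes to zero, so $\gamma$, and with it $m_S$, grows without bound.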

What if m went faster than c, such as might be possible with a sci-fi concept like a “tachyonic afterburner”? What would an observer at S see?

Going by our relativistic equations, m now becomes imaginary when measured from S because the argument in the square root of the mass correction factor is now negative. But what if this asymptotic property really represents more of an event horizon than an impenetrable barrier? A commonly used model for the event horizon is the point on a black hole at which gravity prevents light from escaping. Anything falling past that point can no longer be observed from the outside. Instead it would look as if that object froze on the horizon, because time stands still there. Or so some cosmologists say. This is an interesting model to apply to the idea of superluminality as mass m continues to accelerate past c.
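To see the sign flip under the square root concretely, here is a minimal Python sketch. It simply evaluates the usual Lorentz factor with complex arithmetic; the function name `lorentz_factor` and the choice to pass the speed as a fraction of c are assumptions made for illustration, and nothing here says anything about what such a value would physically mean.

```python
import cmath

def lorentz_factor(beta):
    """Evaluate 1 / sqrt(1 - beta^2), where beta = v/c.

    Uses complex arithmetic so that beta > 1 returns an imaginary
    value instead of raising a math domain error. At beta == 1 the
    division by zero raises ZeroDivisionError.
    """
    return 1 / cmath.sqrt(1 - beta ** 2)

# Below c the result is real; above c the result is purely imaginary.
for beta in (0.5, 0.9, 0.99, 2.0):
    print(f"v = {beta}c  ->  factor = {lorentz_factor(beta)}")
```

Multiplying the mass in R by this factor gives the mass as measured from S: a real number below c, and an imaginary one above it.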

From the standpoint of S, the apparent mass is now infinite, but that is ultimately based on the fact that we can’t perceive speeds past c. Once something goes past c, one of two things might happen. The object might disappear from view due to the fact that the light that it generated that would allow us to observe it can’t keep up with its speed. Alternatively, invoking the postulate that light speed is the same in all reference frames, the object might behave like it does on the event horizon of the black hole – forever frozen, from the standpoint of S, with the properties that it had when it hit light speed. From R, everything could be hunky dory. Just cruising along at warp speed. No need to say that it is impossible because mass can’t exceed infinity, because from S, the object froze at the event horizon. Relativity made all of the correct predictions of properties, behavior, energy, and mass prior to light speed. Yet, with this model, it doesn’t preclude superluminality. It only precludes the ability to make measurements beyond the speed of light.

That is, of course, unless we can figure out how to make measurements utilizing a force or energy that travels at speeds greater than c. If we could, those measurements would yield results with correction factors only at speeds relatively near THAT speed limit.
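As a rough illustration of that last point, suppose the correction factor kept its familiar form but with some other limiting speed in place of c. That is purely an assumption for the sake of the thought experiment, as is the value of 10c used below; `correction_factor` is a hypothetical helper, not anything from established physics.

```python
import math

def correction_factor(v, limit):
    """Lorentz-style factor 1 / sqrt(1 - (v/limit)^2).

    Assumes (hypothetically) that the correction keeps its usual
    form, with `limit` playing the role that c normally plays.
    """
    if abs(v) >= limit:
        raise ValueError("speed must be below the limiting speed")
    return 1.0 / math.sqrt(1.0 - (v / limit) ** 2)

c = 1.0                      # work in units where c = 1
hypothetical_limit = 10 * c  # illustrative value only

for v in (0.5 * c, 1.0 * c, 5.0 * c, 9.9 * c):
    factor = correction_factor(v, hypothetical_limit)
    print(f"v = {v:.1f}c  factor = {factor:.4f}")
```

Under these assumptions, an object moving at c would need a correction of only about half a percent, and the factor would become large only as the speed approached the new limit.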

Let’s imagine an instantaneous communication method. Could there be such a thing?

One possibility might be quantum entanglement. The Delayed Choice Quantum Eraser experiment, an extension of John Wheeler’s delayed-choice thought experiment, seems to imply non-causality and the ability to erase the past. Integral to this experiment is the concept of entanglement. So perhaps it is not a stretch to imagine that entanglement might embody a communication method that creates some strange effects when integrated with observational effects based on traditional light and sight methods.

What would the existence of that method do to relativity? Nothing, according to the thought experiments above.

There are, however, some relativistic effects that seem to stick, even after everything has returned to the original reference frame. This would seem to violate the idea that the existence of an instantaneous communication method invalidates the need for relativistic correction factors applied to anything that doesn’t involve light and sight.

For example, there is the very real effect that clocks once moving at high speeds (reference frame R) exhibit a loss of time once they return to the reference frame S, fully explained by time dilation effects. It would seem that, using this effect as a basis for a thought experiment like the twin paradox, there might be a problem with the event horizon idea. For example, let us imagine Alice and Bob, both aged 20. After Alice travels at speed c to a star 10 light years away and returns, her age should still be 20, while Bob is now 40. If we were to allow superluminal travel, it would appear that Alice would have to get younger, or something. But, recalling the twin paradox, it is all about the relative observations that were made by Bob in reference frame S, and Alice, in reference frame R, of each other. Again, at superluminal speeds, Alice may appear to hit an event horizon according to Bob. So, she will never reduce her original age.
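The subluminal version of the Alice and Bob arithmetic can be sketched in a few lines of Python. This is a simplified model that assumes a constant cruising speed and ignores acceleration and the turnaround; the function name `round_trip_ages` and its parameters are made up for illustration.

```python
import math

def round_trip_ages(distance_ly, beta, start_age=20.0):
    """Return (bob_age, alice_age) after a round trip at beta = v/c < 1.

    Bob stays in S; Alice travels distance_ly light years out and back.
    Constant speed is assumed, and acceleration phases are ignored.
    """
    if not 0 < beta < 1:
        raise ValueError("beta must be between 0 and 1 (exclusive)")
    bob_elapsed = 2 * distance_ly / beta                     # years in S
    alice_elapsed = bob_elapsed * math.sqrt(1 - beta ** 2)   # years in R
    return start_age + bob_elapsed, start_age + alice_elapsed

for beta in (0.8, 0.99, 0.9999):
    bob, alice = round_trip_ages(10, beta)
    print(f"v = {beta}c: Bob is {bob:.2f}, Alice is {alice:.2f}")
```

As the speed approaches c, Bob’s age approaches 40 while Alice’s approaches 20, which is the limiting case described above. The square root has no real value for speeds past c, which is exactly where the event horizon idea has to take over.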

But what about her? From her perspective, her trip is instantaneous due to an infinite Lorentz contraction factor; hence she doesn’t age. If she travels at 2c, her view of the universe might hit another event horizon, one that prevents her from experiencing any Lorentz contraction beyond c; hence, her trip will still appear instantaneous, no aging, no age reduction.

So why would an actual relativistic effect like reduced aging occur in a universe where an infinite communication speed might be possible? In other words, what would tie time to the speed of light instead of some other speed limit?

It may be simply because that’s the way it is. It appears that relativistic equations may not necessarily impose a barrier to superluminal speeds, superluminal information transfer, or even acceleration past the speed of light. In fact, if we accept that relativity says nothing about what happens past the speed of light, we are free to suggest that the observable effects freeze at c. Perhaps traveling past c does nothing more than create unusual effects like disappearing objects or things freezing at event horizons until they slow back down to an “observable” speed. We certainly don’t have enough evidence to investigate further.

But perhaps CERN has provided us with our first data point.