Comments on the Possibilist Transactional Interpretation of Quantum Mechanics, aka Models vs. Reality

September 30, 2015

Reality is what it is. Everything else is just a model.

From Plato to Einstein to random humans like myself, we are all trying to figure out what makes this world tick. Sometimes I think I get it pretty well, but I know that I am still a product of my times, and therefore my view of reality is seen through the lens of today’s technology and state of scientific advancement. As such, I would be a fool to think that I have it all figured out. So would anyone else.

At one point in our recent past, human scientific endeavor wasn’t so humble. Just a couple of hundred years ago, we thought that atoms were the ultimate building blocks of reality and that everything could ultimately be described by the equations of mechanics. How naïve that was, as 20th century physics made abundantly clear. But even then, the atom-centric view of physics was not reality. It was simply a model. So is every single theory and equation that we use today, regardless of whether it is called a theory or a law: relativistic motion, Schrödinger’s equation, String Theory, the 2nd Law of Thermodynamics – all models of some aspect of reality.

We seek to understand our world and devise experiments that push that knowledge forward. As a result of the experiments, we define models to best fit the data.

One of the latest comes from quantum physicist Ruth Kastner in the form of a model that better explains the anomalies of quantum mechanics. She calls the model the Possibilist Transactional Interpretation of Quantum Mechanics (PTI), an updated version of John Cramer’s Transactional Interpretation of Quantum Mechanics (TIQM, or TI for short), proposed in 1986. The transactional nature of the theory comes from the idea that wavefunction collapse behaves like a transaction, in that there is an “offer” from an “emitter” and a “confirmation” from an “absorber.” In the PTI enhancement, the offers and confirmations are considered to be outside of normal spacetime, and therefore the wavefunction collapse creates spacetime rather than occurring within it. Apparently, this helps to explain some existing anomalies, like uncertainty and entanglement.

This is all cool and seems to enhance our understanding of how QM works. However, it is STILL just a model, and a fairly high-level one at that. And all models are approximations: descriptions of reality that most closely match the available experimental evidence.

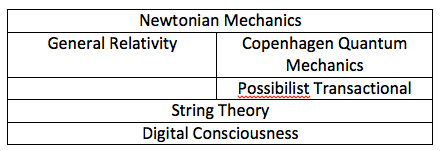

Underneath all models exist deeper models (e.g. string theory), many as yet unsupported by real evidence. Underneath those models may exist even deeper models. Consider this layering…

Every layer contains models that may be considered to be progressively closer to reality. Each layer can explain the layer above it. But it isn’t until you get to the bottom layer that you can say you’ve hit reality. I’ve identified that layer as “digital consciousness”, the working title for my next book. It may also turn out to be a model, but it feels like it is distinctly different from the other layers in that, by itself, it is no longer an approximation of reality, but rather a complete and comprehensive yet elegantly simple framework that can be used to describe every single aspect of reality.

For example, in Digital Consciousness, everything is information. The “offer” is then “the need to collapse the wave function based on the logic that there is now an existing conscious observer who depends on it.” The “confirmation” is the collapse – the decision made from probability space that defines positions, spins, etc. This could also be seen as the next state of the state machine that defines such behavior. The emitter and absorber are both parts of the “system”, the global consciousness that is “all that there is.” So, if experimental evidence ultimately demonstrates that PTI is a more accurate interpretation of QM, it will nonetheless still be a model and an approximation. The bottom layer is where the truth is.

Elvidge’s Postulate of Countable Interpretations of QM…

The number of interpretations of Quantum Mechanics always exceeds the number of physicists.

Let’s count the various “interpretations” of quantum mechanics:

- Bohm (aka Causal, or Pilot-wave)

- Copenhagen

- Cosmological

- Ensemble

- Ghirardi-Rimini-Weber

- Hidden measurements

- Many-minds

- Many-worlds (aka Everett)

- Penrose

- Possibilist Transactional (PTI)

- Relational (RQM)

- Stochastic

- Transactional (TIQM)

- Von Neumann-Wigner

- Digital Consciousness (DCI, aka Elvidge)

Unfortunately, you won’t find the last one on Wikipedia. Give it about 30 years.